In recent months, I’ve begun moving some of my analytics functions to the cloud. Specifically, I’ve been moving many of my Python scripts and APIs to AWS Lambda using the Zappa framework. In this post, I’ll share some basic information about Python and AWS Lambda…hopefully it will get everyone out there thinking about new ways to use platforms like Lambda.

Before we dive into an example of what I’m moving to Lambda, let’s spend some time talking about Lambda itself. When I first heard about it, I was confused…but once I ‘got’ it, I saw the value. Here’s the description of Lambda from AWS’ website:

AWS Lambda lets you run code without provisioning or managing servers. You pay only for the compute time you consume – there is no charge when your code is not running. With Lambda, you can run code for virtually any type of application or backend service – all with zero administration. Just upload your code and Lambda takes care of everything required to run and scale your code with high availability. You can set up your code to automatically trigger from other AWS services or call it directly from any web or mobile app.

Once I realized how easy it is to move code to Lambda and use it whenever/wherever I needed it, I jumped at the opportunity. But…it took a while to get a good workflow in place to simplify deploying to Lambda. Then I stumbled across Zappa and couldn’t be happier…it makes deploying to Lambda simple (very simple).

OK. So. Why would you want to move your code to Lambda?

Lots of reasons. Here are a few:

- Rather than host your own server to handle some API endpoints — move to Lambda

- Rather than build out a complex development environment to support your complex system, move some of that complexity to Lambda and make a call to an API endpoint.

- If you travel and want to downsize your travel laptop but still need access to your Python data analytics stack, move the stack to Lambda.

- If you have a script that you run very irregularly and don’t want to pay $5 a month at Digital Ocean — move it to Lambda.

There are many other more sophisticated reasons of course, but these’ll do for now.

Let’s get started looking at Python and AWS Lambda. You’ll need an AWS account for this.

First – I’m going to talk a bit about building an API endpoint using Flask. You don’t have to use Flask, but it’s an easy framework to use and you can quickly build an API endpoint with very little fuss. With this example, I’m going to use Lambda to host an API endpoint that uses the Newspaper library to scrape a website, pull down the text and return that text to my local script.

Writing your first Flask + Lambda API

To get started, install Flask, Flask-RESTful, Newspaper and Zappa (the code below imports Newspaper, so it needs to be installed too). You’ll want to do this in a fresh environment using virtualenv (see my previous posts about virtualenv and vagrant) because we’ll be moving this up to Lambda using Zappa.

pip install flask flask_restful newspaper zappa

Our Flask-driven API is going to be extremely simple and fit in less than 20 lines of code:

from flask import Flask
from newspaper import Article  # not used by the hello-world version below
from flask_restful import Resource, Api

app = Flask(__name__)
api = Api(app)


class hello(Resource):
    def get(self):
        return "Hello World"  # Flask-RESTful sends this back as JSON


api.add_resource(hello, '/hello')  # serve the resource at /hello

if __name__ == '__main__':
    app.run(debug=True, host='0.0.0.0', port=5001)

Note: The ‘host='0.0.0.0'’ and ‘port=5001’ arguments are extraneous; they are how I use Flask with vagrant. If you keep them in and run the app locally, you’d need to visit http://0.0.0.0:5001 to view your app.

The last thing you need to do is build your requirements.txt file for Zappa to use when building your application files to send to Lambda. For a quick/dirty requirements file, I used the following:

zappa
newspaper
flask
flask_restful

Now…let’s get this up to lambda. With zappa, its as easy as a couple of command line instructions.

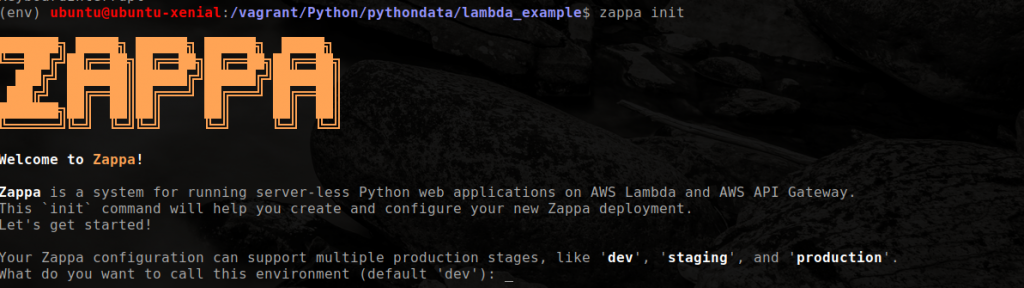

First, run the init command from the command line in your virtualenv:

zappa init

You should see something similar to this:

You’ll be asked a few questions; you can hit ‘enter’ to take the defaults or enter your own. For this example, I used ‘dev’ for the environment name (you can set up multiple environments for dev, staging, production, etc.) and made an S3 bucket for use with this application.

Zappa should realize you are working with a Flask app and automatically set things up for you. It will ask you for the name of your Flask app’s main function (in this case it is api.app, because the app object lives in api.py). Lastly, Zappa will ask if you want to deploy to all AWS regions…I chose not to for this example. Once complete, you’ll have a zappa_settings.json file in your directory that will look something like the following:

{
    "dev": {
        "app_function": "api.app",
        "profile_name": "default",
        "s3_bucket": "DEV_BUCKET_NAME"
    }
}

(I replaced the real S3 bucket name with DEV_BUCKET_NAME for security purposes.)

I’ve found that I need to add more information to this JSON file before I can successfully deploy. For some reason, Zappa doesn’t add the region (“aws_region”) to the settings file. I also like to add the “runtime”. Edit your JSON file to read (feel free to use whatever region you want):

{
    "dev": {
        "app_function": "api.app",
        "profile_name": "default",
        "s3_bucket": "DEV_BUCKET_NAME",
        "runtime": "python2.7",
        "aws_region": "us-east-1"
    }
}
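Since a missing region is easy to overlook, a small pre-deploy check can catch it. What follows is only a sketch: treating these four keys as required is my assumption for this post's setup (not a rule Zappa enforces), and the helper names `missing_keys` and `check_settings_file` are hypothetical, not part of Zappa.

```python
import json

# Keys used in this post's settings file. Treating them as required is an
# assumption of this sketch, not something Zappa itself enforces.
REQUIRED_KEYS = ("app_function", "aws_region", "runtime", "s3_bucket")


def missing_keys(settings):
    """Map each stage name to the sorted list of required keys it lacks."""
    problems = {}
    for stage, config in settings.items():
        missing = sorted(key for key in REQUIRED_KEYS if key not in config)
        if missing:
            problems[stage] = missing
    return problems


def check_settings_file(path="zappa_settings.json"):
    """Load a settings file from disk and report missing keys per stage."""
    with open(path) as handle:
        return missing_keys(json.load(handle))


# The settings as generated in this post, before region and runtime
# were added by hand.
generated = json.loads("""
{
    "dev": {
        "app_function": "api.app",
        "profile_name": "default",
        "s3_bucket": "DEV_BUCKET_NAME"
    }
}
""")

result = missing_keys(generated)
print(result)
```

Running `check_settings_file()` in the project directory would apply the same check to the real file; an empty dictionary means every stage has all four keys.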

Now…you are ready to deploy. You can do that with the following command:

zappa deploy dev

Zappa will set up all the necessary configurations and systems on AWS AND zip up your libraries and code and push it to Lambda. I’ve not found another framework as easy to use as Zappa when it comes to deploying…if you know of one feel free to leave a comment.

After a minute or two, you should see a “Deployment Complete: …” message that includes the endpoint for your new API. In this case, Zappa built the following endpoint for me:

https://4wq2muonbb.execute-api.us-east-1.amazonaws.com/dev

If you make some changes to your code and need to update Lambda, Zappa makes it easy to do that with the following command:

zappa update dev

Additionally, if you want to add a ‘production’ lambda environment, all you need to do is add that new environment to your settings json file and deploy it. For this example, our settings file would change to:

{
    "dev": {
        "app_function": "api.app",
        "profile_name": "default",
        "s3_bucket": "DEV_BUCKET_NAME",
        "runtime": "python2.7",
        "aws_region": "us-east-1"
    },
    "prod": {
        "app_function": "api.app",
        "profile_name": "default",
        "s3_bucket": "PROD_BUCKET_NAME",
        "runtime": "python2.7",
        "aws_region": "us-east-1"
    }
}

Next, run a deploy for prod and your production environment is ready to go at a new endpoint.

zappa deploy prod
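If you'd rather not copy the stage block by hand (and risk a stray typo in the JSON), the new environment can be derived from an existing one. This is a rough sketch using only the standard library; `add_stage` is a hypothetical helper of my own, not a Zappa feature, and the bucket names are the placeholders used above.

```python
import copy
import json


def add_stage(settings, source, target, **overrides):
    """Return new settings where `target` is a copy of `source` plus overrides."""
    if source not in settings:
        raise KeyError("unknown stage: %s" % source)
    updated = copy.deepcopy(settings)  # leave the caller's dictionary untouched
    stage = copy.deepcopy(settings[source])
    stage.update(overrides)
    updated[target] = stage
    return updated


dev_only = {
    "dev": {
        "app_function": "api.app",
        "profile_name": "default",
        "s3_bucket": "DEV_BUCKET_NAME",
        "runtime": "python2.7",
        "aws_region": "us-east-1",
    }
}

# Copy dev to prod, changing only the bucket name.
with_prod = add_stage(dev_only, "dev", "prod", s3_bucket="PROD_BUCKET_NAME")
print(json.dumps(with_prod, indent=4, sort_keys=True))
```

The printed JSON can be saved as zappa_settings.json; going through `json.dumps` also guarantees the file is valid JSON.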

Interfacing with the API

Our code is pushed to Lambda and ready to start accepting requests. In this example’s case, all we are doing is returning “Hello World”, but you can see the power in this for other functionality. To check out the results, just open a browser, enter your Zappa deployment URL and append /hello to the end of it like this:

https://4wq2muonbb.execute-api.us-east-1.amazonaws.com/dev/hello

You should see the standard “Hello World” response in your browser window.
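The same check can be made from a local script, which is closer to how I actually use these endpoints. Here is a minimal sketch for Python 3 using only the standard library; `endpoint_url` and `call_endpoint` are hypothetical helper names, and the base URL is the one from this post, so substitute whatever Zappa printed for your deployment.

```python
import json
from urllib.request import urlopen


def endpoint_url(base_url, path):
    """Join a Zappa deployment URL and a resource path with a single slash."""
    return base_url.rstrip("/") + "/" + path.lstrip("/")


def call_endpoint(base_url, path, timeout=10):
    """GET the endpoint and decode the JSON body that Flask-RESTful returns."""
    with urlopen(endpoint_url(base_url, path), timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Deployment URL from this post; replace it with your own.
BASE_URL = "https://4wq2muonbb.execute-api.us-east-1.amazonaws.com/dev"

hello_url = endpoint_url(BASE_URL, "/hello")
print(hello_url)
```

Calling `call_endpoint(BASE_URL, "/hello")` makes the actual request. It is left out of the script body above because it needs network access and a live deployment.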

You can find the code for the lambda api.py function here.

Note: At some point, I’ll pull this endpoint down…but will leave it up for a bit for users to play around with.

The post Python and AWS Lambda – A match made in heaven appeared first on Python Data.