I recently gave a presentation at Venture Cafe describing how I see automation changing Python machine-learning workflows in the near future. In this post, I highlight the presentation’s main points. You can find the slides here. From Ray Kurzweil’s excitement about a technological singularity to Elon Musk’s warnings about an A.I. apocalypse, automated machine-learning evokes […]

Author: Dan Vatterott

Python Aggregate UDFs in Pyspark

PySpark has a great set of aggregate functions (e.g., count, countDistinct, min, max, avg, sum), but these are not enough for all cases (particularly if you’re trying to avoid costly shuffle operations). PySpark currently has pandas_udfs, which can create custom aggregators, but you can only “apply” one pandas_udf at a time. If you want to […]

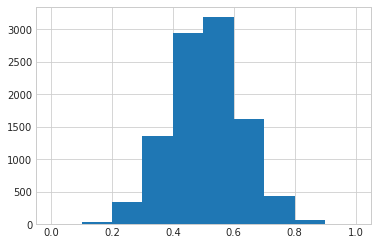

Regression of a Proportion in Python

I frequently predict proportions (e.g., proportion of year during which a customer is active). This is a regression task because the dependent variable is a float, but the dependent variable is bounded between 0 and 1. Googling around, I had a hard time finding a good way to model this situation, so I’ve […]

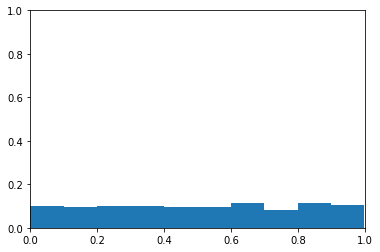

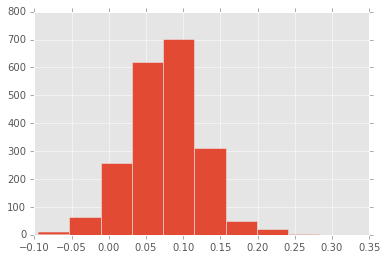

Exploring ROC Curves

I’ve always found ROC curves a little confusing, particularly when it comes to ROC curves with imbalanced classes. This blog post is an exploration into receiver operating characteristic (ROC) curves and how they react to imbalanced classes. I start by loading the necessary libraries. import numpy as np import matplotlib.pyplot […]

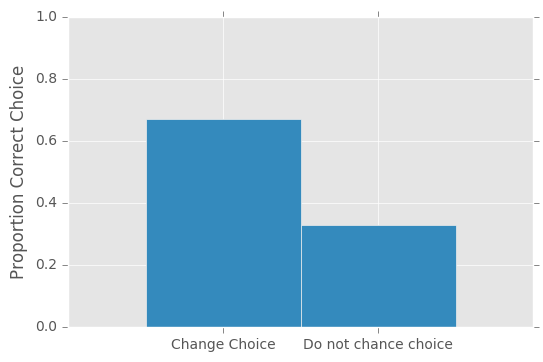

Simulating the Monty Hall Problem

I’ve been hearing about the Monty Hall problem for years and it’s never quite made sense to me, so I decided to program up a quick simulation. In the Monty Hall problem, there is a car behind one of three doors. There are goats behind the other two doors. The contestant picks one of the […]
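The setup in this excerpt can be sketched as a short simulation. This is a minimal sketch, not the code from the post itself: it assumes the standard rules (the host always opens a goat door that the contestant did not pick, then offers a switch), and the names `play` and `win_rate` are hypothetical.

```python
import random


def play(switch, rng):
    """Play one round; return True if the contestant wins the car."""
    doors = [0, 1, 2]
    car = rng.choice(doors)   # door hiding the car
    pick = rng.choice(doors)  # contestant's first choice
    # Assumed rule: the host opens a door that is neither the pick nor the car.
    opened = rng.choice([d for d in doors if d != pick and d != car])
    if switch:
        # Switch to the one remaining unopened door.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == car


def win_rate(switch, n_trials=100_000, seed=0):
    """Estimate the probability of winning with a fixed strategy."""
    rng = random.Random(seed)
    wins = sum(play(switch, rng) for _ in range(n_trials))
    return wins / n_trials


if __name__ == "__main__":
    print("stay:  ", win_rate(switch=False))
    print("switch:", win_rate(switch=True))
```

Under these assumed rules, switching should win about two thirds of the time and staying about one third.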

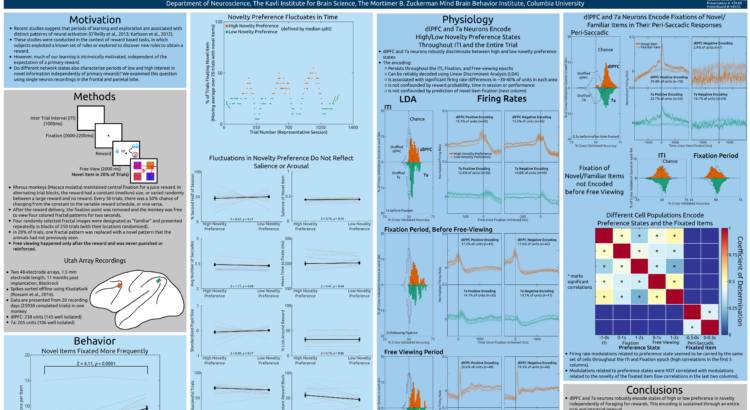

SFN 2016 Presentation

I recently presented at the annual meeting of the Society for Neuroscience, so I wanted to do a quick post describing my findings. The reinforcement learning literature postulates that we go in and out of exploratory states in order to learn about our environments and maximize the reward we gain in these environments. For example, […]

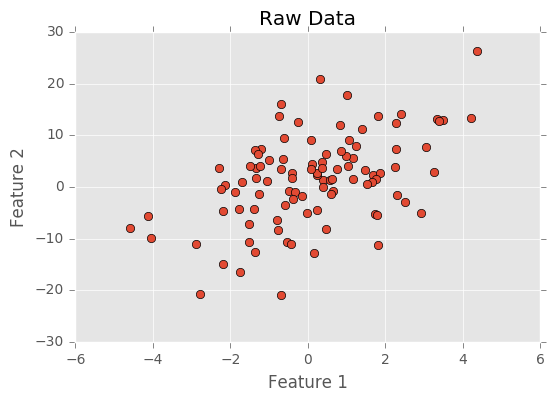

PCA Tutorial

Principal Component Analysis (PCA) is an important method for dimensionality reduction and data cleaning. I have used PCA in the past on this blog for estimating the latent variables that underlie player statistics. For example, I might have two features: average number of offensive rebounds and average number of defensive rebounds. The two features are […]

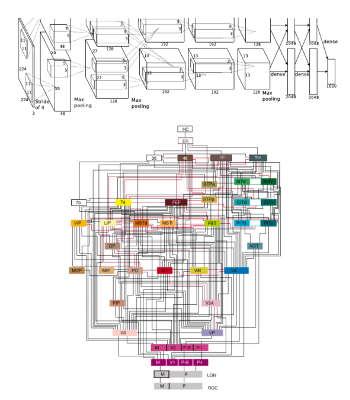

Attention in a Convolutional Neural Net

This summer I had the pleasure of attending the Brains, Minds, and Machines summer course at the Marine Biological Laboratory. While there, I saw cool research, met awesome scientists, and completed an independent project. In this blog post, I describe my project. In 2012, Krizhevsky et al. released a convolutional neural network that completely blew […]

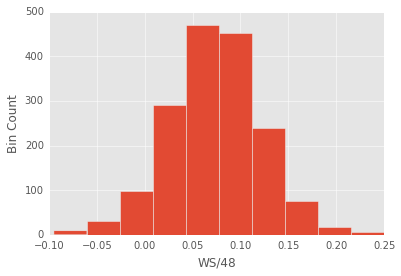

Revisiting NBA Career Predictions From Rookie Performance…again

Now that the NBA season is done, we have complete data from this year’s NBA rookies. In the past I have tried to predict NBA rookies’ future performance using regression models. In this post I am again trying to predict rookies’ future performance, but now using a classification approach. When using a classification approach, […]

Creating Videos of NBA Action With SportVU Data

All basketball teams have a camera system called SportVU installed in their arenas. These camera systems track players and the ball throughout a basketball game. The data produced by SportVU camera systems used to be freely available on NBA.com, but it was recently removed (I have no idea why). Luckily, the data for about 600 games […]

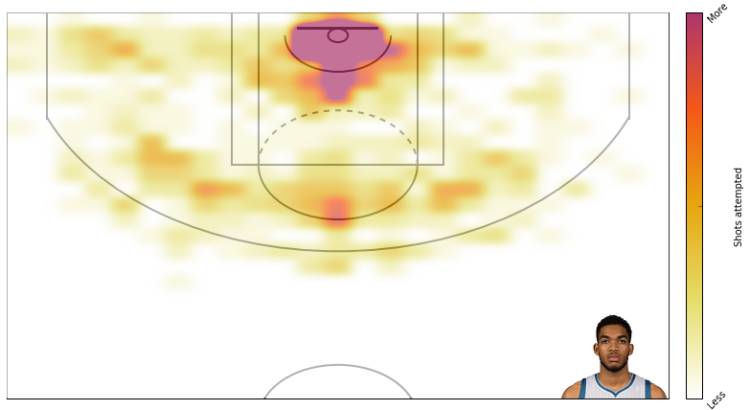

NBA Shot Charts: Updated

For some reason I recently got it in my head that I wanted to go back and create more NBA shot charts. My previous shot charts used colored circles to depict the frequency and effectiveness of shots at different locations. This is an extremely efficient method of representing shooting profiles, but I thought it would be […]

An Introduction to Neural Networks: Part 2

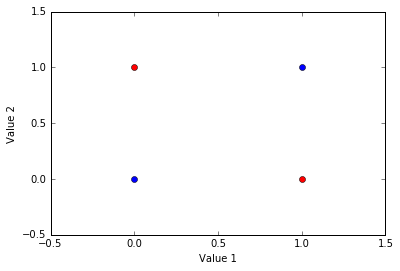

In a previous post, I described how to do backpropagation with a 2-layer neural network. I’ve written this post assuming some familiarity with the previous post. When first created, 2-layer neural networks brought about quite a bit of excitement, but this excitement quickly dissipated when researchers realized that 2-layer neural networks could only solve a […]

An Introduction to Neural Networks: Part 1

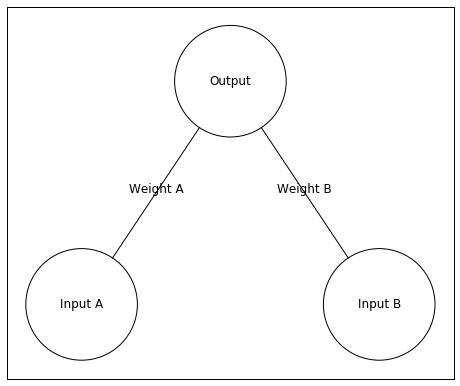

We use our most advanced technologies as metaphors for the brain: The industrial revolution inspired descriptions of the brain as mechanical. The telephone inspired descriptions of the brain as a telephone switchboard. The computer inspired descriptions of the brain as a computer. Recently, we have reached a point where our most advanced technologies – such […]

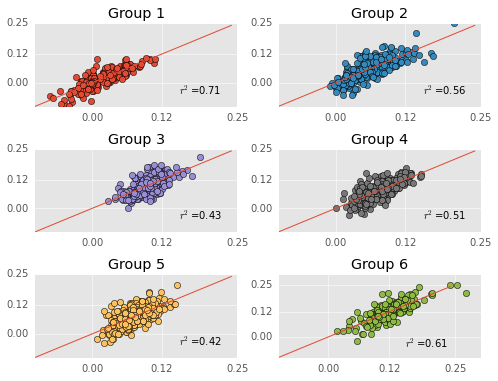

Revisiting NBA Career Predictions From Rookie Performance

In this post I wanted to do a quick follow-up to a previous post about predicting career NBA performance from rookie year data. After my previous post, I started to get a little worried about my career prediction model. Specifically, I started to wonder about whether my model was underfitting or overfitting the data. […]

Predicting Career Performance From Rookie Performance

As a huge T-Wolves fan, I’ve been curious all year about what we can infer from Karl-Anthony Towns’ great rookie season. To answer this question, I’ve created a simple linear regression model that uses rookie year performance to predict career performance. Many have attempted to predict NBA players’ success via regression-style approaches. Notable models […]